- cross-posted to:

- technology@beehaw.org

Text to avoid paywall

The Wikimedia Foundation, the nonprofit organization which hosts and develops Wikipedia, has paused an experiment that showed users AI-generated summaries at the top of articles after an overwhelmingly negative reaction from the Wikipedia editors community.

“Just because Google has rolled out its AI summaries doesn’t mean we need to one-up them, I sincerely beg you not to test this, on mobile or anywhere else,” one editor said in response to the Wikimedia Foundation’s announcement that it would launch a two-week trial of the summaries on the mobile version of Wikipedia. “This would do immediate and irreversible harm to our readers and to our reputation as a decently trustworthy and serious source. Wikipedia has in some ways become a byword for sober boringness, which is excellent. Let’s not insult our readers’ intelligence and join the stampede to roll out flashy AI summaries. Which is what these are, although here the word ‘machine-generated’ is used instead.”

Two other editors simply commented, “Yuck.”

For years, Wikipedia has been one of the most valuable repositories of information in the world, and a laudable model for community-based, democratic internet platform governance. Its importance has only grown in the last couple of years during the generative AI boom, as it’s one of the only internet platforms that has not been significantly degraded by the flood of AI-generated slop and misinformation. As opposed to Google, which since embracing generative AI has instructed its users to eat glue, Wikipedia’s community has kept its articles relatively high quality. As I reported last year, editors are actively working to filter out bad, AI-generated content from Wikipedia.

A page detailing the AI-generated summaries project, called “Simple Article Summaries,” explains that it was proposed after a discussion at Wikimedia’s 2024 conference, Wikimania, where “Wikimedians discussed ways that AI/machine-generated remixing of the already created content can be used to make Wikipedia more accessible and easier to learn from.” Editors who participated in the discussion thought that these summaries could improve the learning experience on Wikipedia, where some article summaries can be quite dense and filled with technical jargon, but that AI features needed to be clearly labeled as such and that users needed an easy way to flag issues with “machine-generated/remixed content once it was published or generated automatically.”

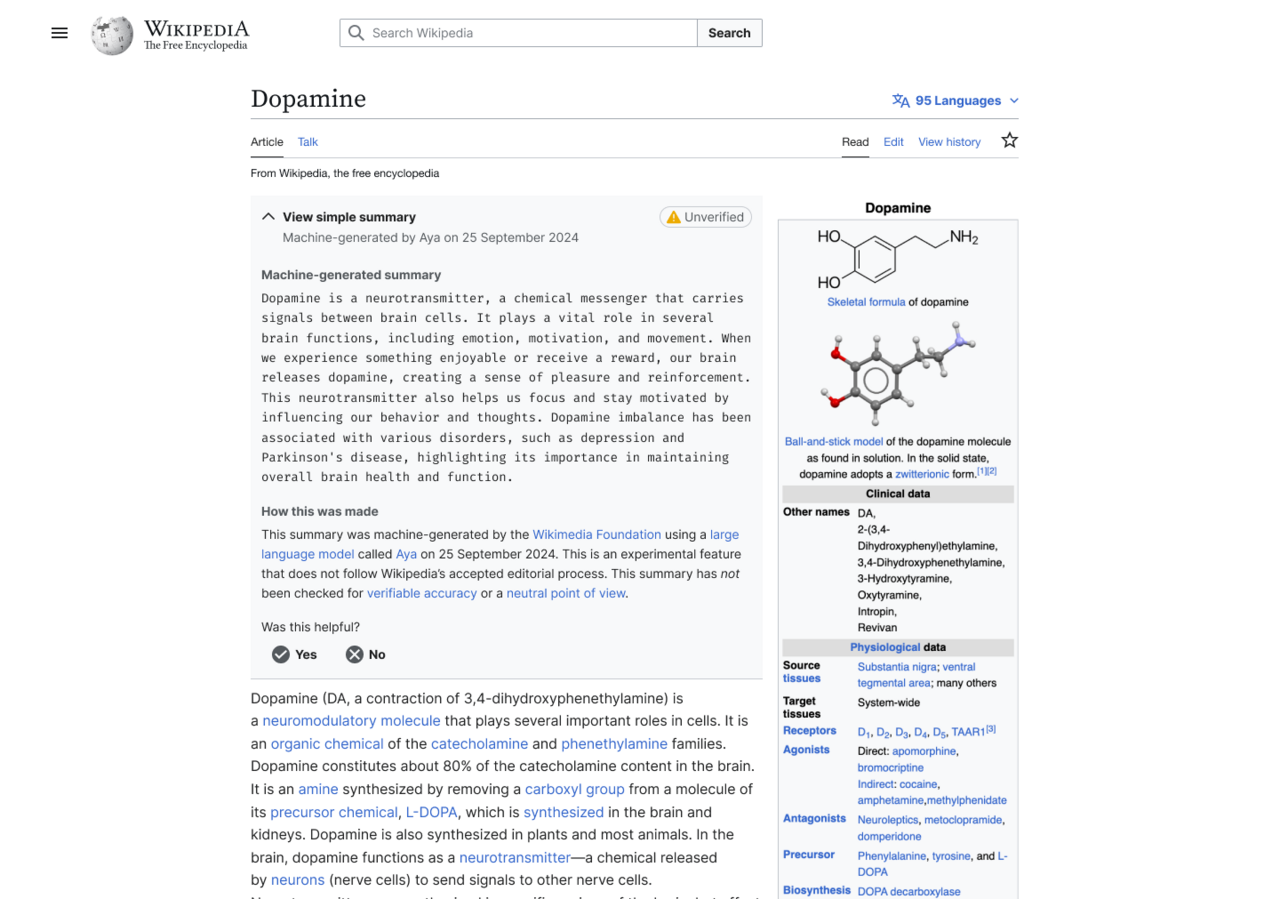

In one experiment where summaries were enabled for users who have the Wikipedia browser extension installed, the generated summary showed up at the top of the article, which users had to click to expand and read. That summary was also flagged with a yellow “unverified” label.

An example of what the AI-generated summary looked like.

Wikimedia announced that it was going to run the generated summaries experiment on June 2, and was immediately met with dozens of replies from editors who said “very bad idea,” “strongest possible oppose,” “Absolutely not,” etc.

“Yes, human editors can introduce reliability and NPOV [neutral point-of-view] issues. But as a collective mass, it evens out into a beautiful corpus,” one editor said. “With Simple Article Summaries, you propose giving one singular editor with known reliability and NPOV issues a platform at the very top of any given article, whilst giving zero editorial control to others. It reinforces the idea that Wikipedia cannot be relied on, destroying a decade of policy work. It reinforces the belief that unsourced, charged content can be added, because this platforms it. I don’t think I would feel comfortable contributing to an encyclopedia like this. No other community has mastered collaboration to such a wondrous extent, and this would throw that away.”

A day later, Wikimedia announced that it would pause the launch of the experiment, but indicated that it’s still interested in AI-generated summaries.

“The Wikimedia Foundation has been exploring ways to make Wikipedia and other Wikimedia projects more accessible to readers globally,” a Wikimedia Foundation spokesperson told me in an email. “This two-week, opt-in experiment was focused on making complex Wikipedia articles more accessible to people with different reading levels. For the purposes of this experiment, the summaries were generated by an open-weight Aya model by Cohere. It was meant to gauge interest in a feature like this, and to help us think about the right kind of community moderation systems to ensure humans remain central to deciding what information is shown on Wikipedia.”

“It is common to receive a variety of feedback from volunteers, and we incorporate it in our decisions, and sometimes change course,” the Wikimedia Foundation spokesperson added. “We welcome such thoughtful feedback — this is what continues to make Wikipedia a truly collaborative platform of human knowledge.”

“Reading through the comments, it’s clear we could have done a better job introducing this idea and opening up the conversation here on VPT back in March,” a Wikimedia Foundation project manager said. VPT, or “village pump technical,” is where the Wikimedia Foundation and the community discuss technical aspects of the platform. “As internet usage changes over time, we are trying to discover new ways to help new generations learn from Wikipedia to sustain our movement into the future. In consequence, we need to figure out how we can experiment in safe ways that are appropriate for readers and the Wikimedia community. Looking back, we realize the next step with this message should have been to provide more of that context for you all and to make the space for folks to engage further.”

The project manager also said that “Bringing generative AI into the Wikipedia reading experience is a serious set of decisions, with important implications, and we intend to treat it as such,” and that “We do not have any plans for bringing a summary feature to the wikis without editor involvement. An editor moderation workflow is required under any circumstances, both for this idea, as well as any future idea around AI summarized or adapted content.”

And user backlash. Seriously, wtf?

Who at Wikimedia is so out of touch that they thought that this was a good idea? They need to be replaced.

Same person who saw most American adults have a 6th grade reading level or lower?

Honestly that’s the reason I thought it was a good idea at least. Might actually give them a place to start learning from and improve.

People with low reading level deserve the same attention to detail and veracity as the rest of us.

Those Americans with a 6th grade reading level or less are precisely the people who shouldn’t be reading AI summaries. They’ll lack the critical thinking and reading skills to catch on to garbage.

Simple Wikipedia already exists and is great.

Problem is they can’t read Wikipedia articles in the first place. A lot of it, in particular anything STEM, is higher level reading.

What you’re advocating for is the same as dropping off a physics textbook at an elementary school.

That’s why I mentioned Simple Wikipedia.

This is far more readable than what an AI-generated version of the article would make.

Didn’t know that exists, and that needs more marketing. I literally have a “Daily Wikipedia Article” thing and never came across it. And maybe a different name, like Simplified Wikipedia, because I thought you meant something different.

Yeah - tbh the name sucks. I hate recommending it to students, because it feels like I’m calling them dumb.

But yes 100%. Instead of doing dumb AI shit, they should be advertising what they already have.

Wikipedia Simple has fewer articles than regular Wikipedia.

And how do you plan to convince editors to add more articles to Wikipedia Simple?

If someone is going to Wikipedia specifically looking for information in a STEM field, then an AI summary isn’t going to help them. Odds are they can also read, because they’re looking up STEM topics.

Also, is Wikipedia not available around the world, or you just think only Americans can’t read? Inflammatory just for the sake of being inflammatory I’m guessing. Shit troll job.

I thought the AI thing was going to be rolled out only in the USA?

I think that’s not possible. Wikipedia collects as little user data as possible, and providing a different UX in different countries sounds like it would already be too intrusive in that regard.

Why the hell would we need AI summaries of a wikipedia article? The top of the article is explicitly the summary of the rest of the article.

thank you

A page detailing the AI-generated summaries project, called “Simple Article Summaries,” explains that it was proposed after a discussion at Wikimedia’s 2024 conference, Wikimania, where “Wikimedians discussed ways that AI/machine-generated remixing of the already created content can be used to make Wikipedia more accessible and easier to learn from.” Editors who participated in the discussion thought that these summaries could improve the learning experience on Wikipedia, where some article summaries can be quite dense and filled with technical jargon, but that AI features needed to be clearly labeled as such and that users needed an easy way to flag issues with “machine-generated/remixed content once it was published or generated automatically.”

The intent was to make more uniform summaries, since some of them can still be inscrutable.

Relying on a tool notorious for making significant errors isn’t the right way to do it, but it’s a real issue being examined.

In thermochemistry, an exothermic reaction is a “reaction for which the overall standard enthalpy change ΔH⚬ is negative.”[1][2] Exothermic reactions usually release heat. The term is often confused with exergonic reaction, which IUPAC defines as “… a reaction for which the overall standard Gibbs energy change ΔG⚬ is negative.”[2] A strongly exothermic reaction will usually also be exergonic because ΔH⚬ makes a major contribution to ΔG⚬. Most of the spectacular chemical reactions that are demonstrated in classrooms are exothermic and exergonic. The opposite is an endothermic reaction, which usually takes up heat and is driven by an entropy increase in the system.

This is a perfectly accurate summary, but it’s not entirely clear and has room for improvement.

I’m guessing they were adding new summaries so that they could clearly label them and not remove the existing ones, not out of a desire to add even more summaries.

Wikimedians discussed ways that AI/machine-generated remixing of the already created content can be used to make Wikipedia more accessible and easier to learn from

The entire mistake right there. Look no further. They saw a solution (LLMs) and started hunting for a problem.

Had they done it the right way round there might have been some useful, though less flashy, outcome. I agree many article summaries are badly written. So why not experiment with an AI that flags those articles for review? Or even just organize a community drive to clean up article summaries?

The questions are rhetorical of course. Like every GenAI peddler they don’t have an interest in the problem they purport to solve, they just want to play with or sell you this shiny toy that pretends really convincingly that it is clever.

Fundamentally, I agree with you.

Because the phrase “Wikipedians discussed ways that AI…” is ambiguous, I tracked down the page being referenced. It could mean they gathered with the intent to discuss that topic, or they discussed it as a result of considering the problem.

The page gives me the impression that it’s not quite “we’re gonna use AI, figure it out”, but more that some people put together a presentation on how they felt AI could be used to address a broad problem, and then they workshopped more focused ways to use it towards that broad target.

It would have been better if they had started with an actual concrete problem, brainstormed solutions, and then gone with one that fit, but they were at least starting with a problem domain that they thought it was applicable to.

Personally, the problems I’ve run into on Wikipedia are largely low traffic topics where the content is too much like someone copied a textbook into the page, or just awkward grammar and confusing sentences.

This article quickly makes it clear that someone didn’t write it in an encyclopedia style from scratch.

Mathematics articles are the most obtuse I come across. I think the Venn diagram of good mathematicians and good science communicators is very close to non-intersecting.

Somebody tried to build a bridge between both groups but they ran into the conundrum that to get to the other side they would first need to get half way to that side, then get half way of the remaining distance, then half way the new remaining distance and so on an infinite number of times, and as the bridge was started from the science communicators side rather than the mathematicians side, they couldn’t figure out a solution and gave up.

Even beyond that, the “complex” language they claim is confusing is the whole point of Wikipedia. Neutral, precise language that describes matters accurately for laymen. There are links to every unusual or complex related subject and even individual words in all the articles.

I find it disturbing that a major share of the userbase is supposedly unable to process the information provided in this format, and needs it dumbed down even further. Wikipedia is already the summarized and simplified version of many topics.

There’s also a “simple english” Wikipedia: simple.wikipedia.org

Oh come on, it’s not that simple. Add to that the language barrier. And in general, precise language and accuracy are not making knowledge more available to laymen. Laymen don’t have the vocabulary to start with; that’s pretty much the definition of being a layman.

There is definitely value in dumbing down knowledge, that’s the point of education.

Now, using AI or pushing guidelines for editors to do it, that’s an entirely different discussion…

The vocabulary is part of the knowledge. The concept goes with the word, that’s how human brains understand stuff mostly.

You can click on the terms you don’t know to learn about them.

This is what makes Wikipedia special. Not the fact that it is a giant encyclopedia, but that you can quickly and logically work your way through a complex subject at your pace and level of understanding. Reading about elements but don’t know what a proton is? Guess what, there’s a link right fucking there!

They have that already: simple.wikipedia.org

And what about simple wikipedia?

some article summaries can be quite dense and filled with technical jargon, but that AI features needed to be clearly labeled as such and that users needed an easy way to flag issues with “machine-generated/remixed content once it was published or generated automatically.”

I feel like if they think this is an issue, they should generate the summary on the talk page and have the editors refine and approve it before publishing. Alternatively, they could set an expectation that article summaries are written in plain English.

some article summaries can be quite dense

Well yeah, that’s the point of a summary. If I want something in long form, I’ll read the article.

Which is why they’re looking to add an easy-to-read short overview.

there’s a summary paragraph at the top of each article which is written by people who have assholes probably. it’s the whole reason to use wikipedia at this point

This was my very first thought as well. The first section of almost every Wikipedia article is already a summary.

Yes, but we didn’t emit nearly enough CO2 on that one

Summaries for complex Wikipedia articles would be great, especially for people less knowledgeable of the given topic, but I don’t see why those would have to be AI-generated.

There are also external AI tools that do this just fine.

But imagine these tools generating summaries of summaries.

I mean, that’s kinda why there’s Simple English, is it not?

For English yes, but there’s no equivalent in other languages.

Maybe we could generate those with AI… oh wait, I think I see the problem…

Wikipedia is already the processed food of more complex topics.

The top section of each Wikipedia article is already a summary of the article

Fucking thank you. Yes, and as an experienced editor, to add to this: that’s called the lead, and that’s exactly what it exists to do. Readers are not even close to starved for summaries:

- Every single article has one of these. It is at the very beginning, and at most around 600 words for very extensive, multifaceted subjects. 250 to 400 words is generally considered an excellent window to target.

- Even then, the first sentence itself is almost always a definition of the subject, making it a summary unto itself.

- And even then the first paragraph is also its own form of summary in a multi-paragraph lead.

- And even then, the infobox to the right of 99% of articles gives you easily digestible data about the subject in case you only care about raw, important facts (e.g. when a politician was in office, what a country’s flag is, what systems a game was released for, etc.)

- And even then, if you just want a specific subtopic, there’s a table of contents, and we generally try as much as possible (without harming the “linear” reading experience) to make it so that you can intuitively jump straight from the lead to a main section (level 2 header).

- Even then, if you don’t want to click on an article and just instead hover over its wikilink, we provide a summary of fewer than 40 characters so that readers get a broad idea without having to click (e.g. Shoeless Joe Jackson’s is “American baseball player (1887–1951)”).

What’s outrageous here isn’t wanting summaries; it’s that summaries already exist in so many ways, written by the human writers who write the contents of the articles. Not only that, but as a free, editable encyclopedia, these summaries can be changed at any time if editors feel like they no longer do their job somehow.

Yeah, this screams “Let’s use AI for the sake of using AI”. If they wanted simpler summaries on complex topics, they could just start an initiative to have them added by editors instead of using a wasteful, inaccurate hype machine.

Two other editors simply commented, “Yuck.”

What insightful and meaningful discourse.

If they’re high quality editors who consistently put out a lot of edits then yeah, it is meaningful and insightful. Wikipedia exists because of them and only them. If most feel like they do and stop doing all this maintenance for free, then Wikipedia becomes a graffiti wall/ad space and not an encyclopedia.

Thinking the immediate disgust of the people doing all the work for you for free is meaningless is the best way to nose dive.

Also, you literally had to scroll past a very long and insightful comment to get to that.

No I didn’t. It’s in the summary, appropriately enough.

I know one study found that 51% of the summaries that AI produced for them contained significant errors. So AI summaries are bad news for anyone who hopes to be well informed. Source: https://www.bbc.com/news/articles/c0m17d8827ko

I like that they are listening to their editors, I hope they don’t stop doing that.

The main issue I have as an editor is that there is no straightforward way to retrain the LLM to correct faulty training as directly or revertably as the existing method of editing an article’s wikicode. Already, much of my time updating Wikipedia is spent parsing puffery and removing phrases like “award-winning” or “renowned”, inserted by malicious advertisers trying to use Wikipedia as a free billboard. If a Wikipedia LLM began making subjective claims instead of providing objective facts backed by citations, I would have to teach myself machine learning and get involved with the developers who manage the LLM’s training. That raises the bar for editor technical competency, which Wikipedia has historically been striving to lower (e.g. VisualEditor).

“Pause” and not “Stop” is concerning.

Is it just me, or was the addition of AI summaries basically predetermined? The AI panel probably would only be attended by a small portion of editors (introducing selection bias) and it’s unclear how much of the panel was dedicated to simply promoting the concept.

I imagine the backlash comes from a much wider selection of editors.

Wikimedia has too much money; maybe this has started to create rotten tumors inside it.

Yes, throw out the one thing that differentiates you from the unreliable slop.

These summaries are useless anyways because the AI hallucinates like crazy… Even the newest models constantly make up bullshit.

It can’t be relied on for anything, and it’s double work reading the words it shits out and then you still gotta double check it’s not made up crap.

Wrong community, please repost to the community for Onion articles.

Good! I was considering stopping my monthly donation. They better kill the entire “machine-generated” nonsense instead of just pausing, or I will stop my pledge!

Good! I was considering stopping my monthly donation.

Ditto. I don’t want to overreact, but it’s not a good look.

If they have enough money to burn on LLM results, they clearly have enough and I don’t need to keep donating mine.

Same.

I still use the Wikipedia MonoBook skin, so I had no idea this was a feature.