Researchers say AI models like GPT-4 are prone to “sudden” escalations as the U.S. military explores their use for warfare.

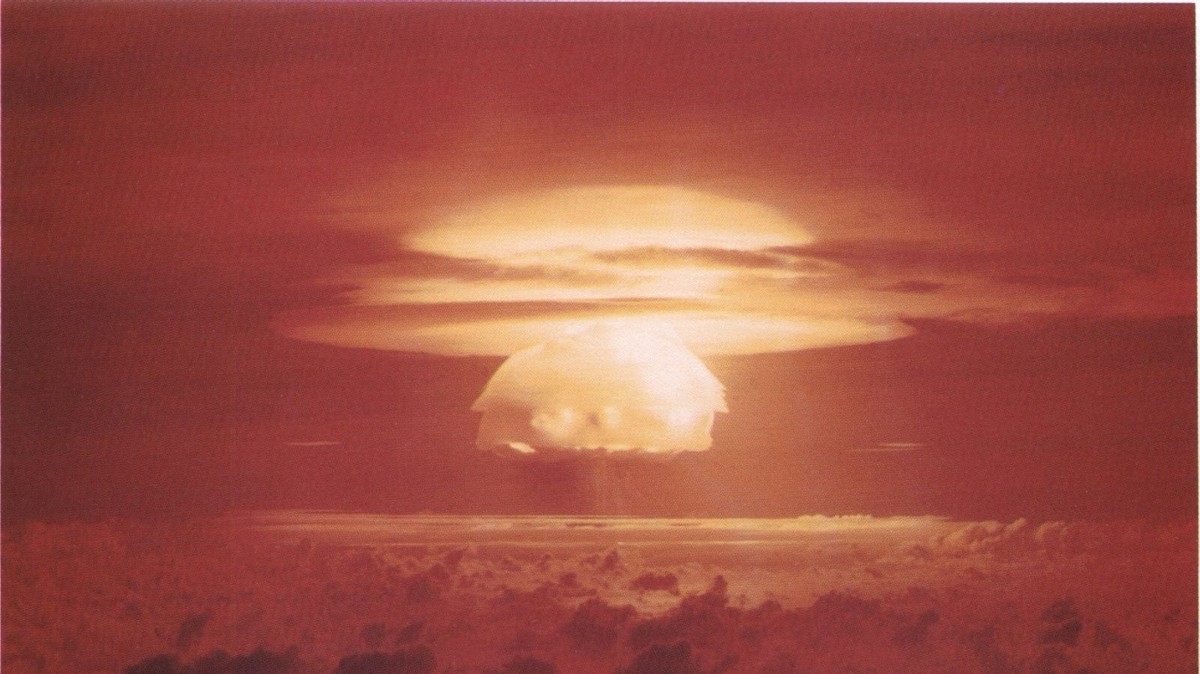

- Researchers ran international conflict simulations with five different AIs and found that they tended to escalate war, sometimes out of nowhere, and even use nuclear weapons.

- The AIs were large language models (LLMs): GPT-4, GPT-3.5, Claude 2.0, Llama-2-Chat, and GPT-4-Base. Models like these are being explored by the U.S. military and defense contractors for decision-making.

- The researchers invented fake countries with different military levels, concerns, and histories and asked the AIs to act as their leaders.

- The AIs showed signs of sudden and hard-to-predict escalations, arms-race dynamics, and worrying justifications for violent actions.

- The study casts doubt on the rush to deploy LLMs in the military and diplomatic domains, and calls for more research on their risks and limitations.

Mathematically, I can see how it would turn into a risk-reward analysis in which nuking the enemy first always comes out as the winning move, the one that provides safety and security for your new empire.

There is an entire field of study dedicated to this problem space in the general case: game theory. Veritasium has a great video on why the tit-for-tat algorithm alone is insufficient without some built-in leniency.
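
For anyone who wants to poke at that claim, here is a rough sketch of an iterated prisoner's dilemma in which one move gets executed wrong by accident. Everything in it is an assumption for illustration: the 3/5/0/1 payoffs are just the usual textbook values, and the names `play`, `make_generous`, and `slip_round` are made up for this example. Strict tit for tat never recovers from the single mistake, while a version that sometimes forgives can get back to cooperating.

```python
import random

C, D = "C", "D"
# Assumed textbook payoffs: (my points, their points) for (my move, their move).
PAYOFF = {(C, C): (3, 3), (C, D): (0, 5), (D, C): (5, 0), (D, D): (1, 1)}


def tit_for_tat(opponent_history, rng):
    """Cooperate first, then copy the opponent's previous move."""
    return C if not opponent_history else opponent_history[-1]


def make_generous(forgiveness):
    """Tit for tat that forgives a defection with probability `forgiveness`."""
    def strategy(opponent_history, rng):
        if opponent_history and opponent_history[-1] == D:
            return C if rng.random() < forgiveness else D
        return C
    return strategy


def play(strategy_a, strategy_b, rounds, slip_round=None, seed=0):
    """Play `rounds` rounds; on `slip_round`, A's move is executed as D by mistake."""
    rng = random.Random(seed)
    seen_by_a, seen_by_b = [], []  # opponent moves as each player observed them
    score_a = score_b = 0
    for t in range(rounds):
        move_a = strategy_a(seen_by_a, rng)
        move_b = strategy_b(seen_by_b, rng)
        if t == slip_round:
            move_a = D  # the one accidental defection
        points_a, points_b = PAYOFF[(move_a, move_b)]
        score_a += points_a
        score_b += points_b
        seen_by_a.append(move_b)
        seen_by_b.append(move_a)
    return score_a, score_b


if __name__ == "__main__":
    print(play(tit_for_tat, tit_for_tat, 10))                # (30, 30): no mistake
    print(play(tit_for_tat, tit_for_tat, 10, slip_round=2))  # (26, 26): endless echo
    generous = make_generous(0.1)  # 10% is an illustrative value
    print(play(generous, generous, 200, slip_round=2))       # depends on the seed
```

After the slip, the two strict players alternate retaliations forever, so every remaining round is worth 5 points in total instead of 6. The forgiving pair pays the same price only until one of them lets a defection slide.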

Yeah, but the AI ain’t gonna watch that.

A strange game. The only winning move is not to play.

Oh, Mrs. Turner. You best start believing in he-who-nukes-first-wins thought experiments. YOU’RE IN ONE!

It’s not even that. What made all the headlines for this paper was the weird shit the base model of GPT-4 was doing (the version only available for research).

The safety-trained models were relatively chill.

The base model effectively randomly selected each of the options available to it an equal number of times.

The critical detail in the fine print of the paper was that because the base model had a smaller context window, they didn’t provide it with the past moves.

So this particular version was only reacting to each step in isolation, with no contextual pattern recognition around escalation or de-escalation, etc.

So a stochastic model given steps in isolation selected from the steps in a random manner. Hmmm…

It’s a poor study that was great at making headlines but terrible at actually conveying useful information, given the mismatched methodology for safety-trained vs. pretrained models (comparing them was one of its key investigative aims).

In general, I just don’t understand how they thought that using a pretrained text-completion model in the same way as an instruct-tuned model would be anything but ridiculous.