- cross-posted to:

- sysadmin@lemmy.ml

- sysadmin@lemmy.world

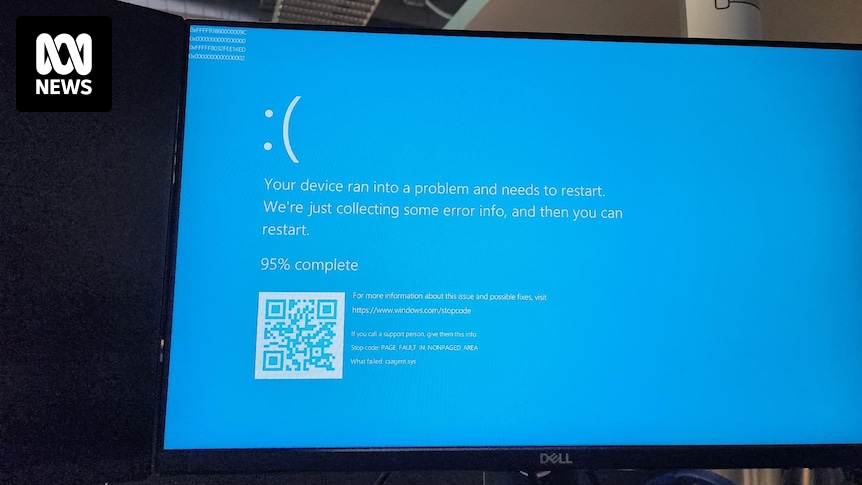

All our servers and company laptops went down at pretty much the same time. Laptops have been bootlooping to blue screen of death. It’s all very exciting, personally, as someone not responsible for fixing it.

Apparently caused by a bad CrowdStrike update.

I think when you are this big you need to roll out any updates slowly, checking along the way that all is good.
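For anyone unfamiliar with the idea, here is a rough sketch of a staged rollout. It is purely illustrative: `push` and `healthy` are hypothetical callbacks standing in for whatever deploys an update and checks a machine afterwards, and the stage fractions and failure threshold are made-up numbers, not anything a real vendor is known to use.

```python
from typing import Callable, List, Sequence, Tuple


def staged_rollout(
    hosts: Sequence[str],
    push: Callable[[str], None],      # hypothetical: deploys the update to one host
    healthy: Callable[[str], bool],   # hypothetical: True if the host is fine afterwards
    stages: Sequence[float] = (0.01, 0.10, 0.50, 1.0),  # assumed cumulative fractions
    max_failure_rate: float = 0.02,   # assumed threshold for halting
) -> Tuple[List[str], bool]:
    """Push to growing waves of hosts and stop as soon as one wave looks bad.

    Returns (hosts that received the update, whether the rollout completed).
    """
    updated: List[str] = []
    for fraction in stages:
        # Cumulative host count after this wave; always at least one more host
        # so that small fleets still make progress.
        target = min(len(hosts), max(len(updated) + 1, int(len(hosts) * fraction)))
        wave = list(hosts[len(updated):target])
        if not wave:
            break
        for host in wave:
            push(host)
        updated.extend(wave)
        failures = sum(1 for host in wave if not healthy(host))
        if failures / len(wave) > max_failure_rate:
            return updated, False  # halt: the remaining hosts never get the update
    return updated, True
```

With 200 hosts and an update that breaks every machine, the first wave is 2 hosts, both fail the check, and the other 198 are never touched.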

The failure here is much more fundamental than that. This isn’t a “no way we could have found this before we went to prod” issue, this is a “five minutes in the lab would have picked it up” issue. We’re not talking about some kind of “Doesn’t print on Tuesdays” kind of problem that’s hard to reproduce or depends on conditions that are hard to replicate in internal testing, which is normally how this sort of thing escapes containment. In this case the entire repro is “Step 1: Push update to any Windows machine. Step 2: THERE IS NO STEP 2”

There’s absolutely no reason this should ever have affected even one single computer outside of CrowdStrike’s test environment, with or without a staged rollout.

God damn, this is worse than I thought… This raises further questions… Was there NO testing at all??

My guess is they did testing but the build they tested was not the build released to customers. That could have been because of poor deployment and testing practices, or it could have been malicious.
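One generic guard against a "tested build is not the released build" mix-up is to record a hash of the artifact that passed testing and refuse to ship anything that does not match it. A minimal sketch, assuming the test pipeline stores a SHA-256 digest somewhere; the function names here are hypothetical and this says nothing about how any particular vendor's pipeline actually works.

```python
import hashlib


def sha256_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def release_matches_tested(release_path: str, tested_digest: str) -> bool:
    """True only if the file about to ship is byte-for-byte what was tested."""
    return sha256_of(release_path) == tested_digest.strip().lower()
```

A check like this catches accidental swaps and corrupted files. It does not help much against a malicious insider who can also change the recorded digest, which is where signing and separation of duties come in.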

Such software would be a juicy target for bad actors.